The conversation around social media addiction has been building for years, but a recent landmark verdict has pushed it into an entirely new phase. What once felt like a personal struggle or a parenting concern is now being examined in courtrooms, with real legal consequences for some of the world’s biggest tech companies.

This verdict is not just about one case or one company. It signals a broader turning point in how society views responsibility in the digital age. For years, platforms have been designed to keep users engaged for as long as possible. Features like endless scrolling, push notifications, and algorithm-driven content have become the norm. Yet now, those same features are being questioned in a legal context, especially when it comes to their impact on mental health.

On one hand, social media has transformed how we connect, learn, and share our lives. It has created opportunities that did not exist before. On the other hand, there is growing evidence that excessive use can lead to anxiety, depression, and behavioral dependency, particularly among younger users. The gap between intent and impact has never been clearer.

Tech companies often argue that their platforms are tools, and users ultimately decide how they engage with them. However, critics point out that these tools are carefully engineered to capture attention in ways that can feel almost impossible to resist. So, at what point does engagement become exploitation?

At the same time, society is becoming more aware of mental health, digital well-being, and the long-term effects of constant connectivity. Parents, educators, and even former tech insiders are speaking out. They are asking whether the systems we rely on every day are truly serving us, or shaping our behavior in ways we do not fully understand.

As this legal battle unfolds, it reveals how we are collectively trying to make sense of a technology that has outpaced our ability to regulate it. In many ways, we are still catching up.

The Case That Sparked a Bigger Conversation

The lawsuit emerged from years of growing concern about how social media platforms affect mental health, particularly among teenagers and young adults. Families, advocacy groups, and researchers have been raising alarms for quite some time. However, this case brought those concerns into a legal framework that demanded accountability.

At the center of the case, as reported by the BBC, were allegations that major tech companies knowingly designed their platforms in ways that could foster dependency. Plaintiffs argued that features such as algorithmic recommendations, infinite scrolling, and intermittent rewards were not accidental. Instead, they were intentional design choices meant to maximize user engagement, even at a psychological cost.

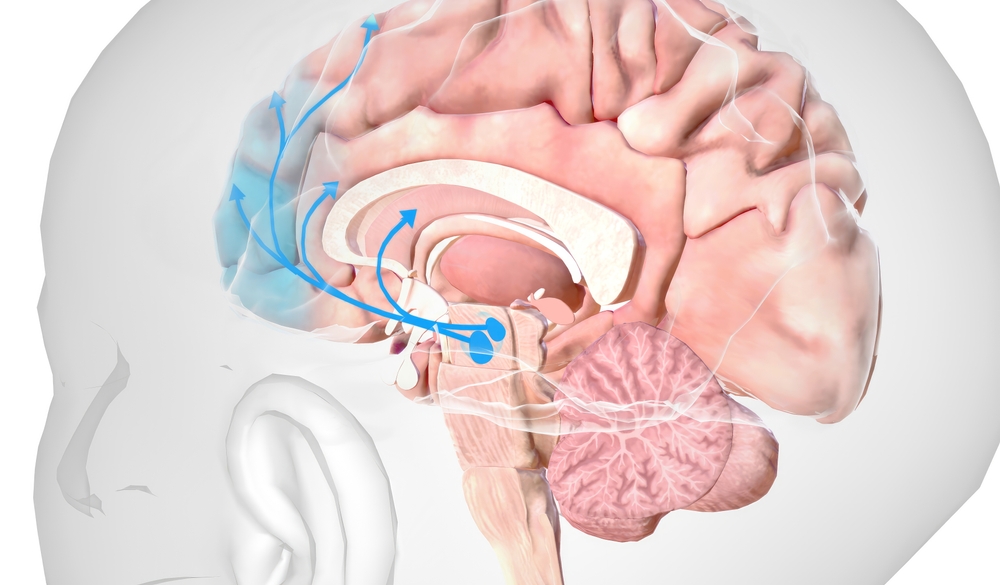

This argument is rooted in a concept often discussed in behavioral science. When users receive unpredictable rewards, such as a sudden wave of likes or an unexpectedly engaging video, the brain releases dopamine. Over time, this creates a feedback loop that encourages repeated use. While this mechanism can make platforms feel enjoyable, critics argue that it can also blur the line between habit and compulsion.

Tech companies, on the other hand, have pushed back strongly against these claims. They argue that their platforms are meant to connect people and provide value, and that users remain in control of how they interact with these tools. Features such as screen time limits, content filters, and parental controls are often cited as evidence that companies are taking steps to promote healthier usage.

The verdict suggests that this argument may not be enough. Whether by allowing the case to move forward or by holding companies partially accountable, the court acknowledged that design choices matter. It recognized that the way a platform is built can influence behavior in ways that go beyond simple user choice.

What makes this case particularly impactful is how it reframes the issue. Instead of asking whether social media is harmful in general, it asks whether companies should be responsible for how their products are designed.

Moreover, the case highlights how public perception is evolving. Just a decade ago, social media was largely seen as a positive force. Today, the conversation is far more complex. People are starting to question not just what these platforms do, but how and why they do it.

In that sense, the verdict is not just a legal milestone. It is a reflection of changing attitudes. It shows that society is beginning to take a closer look at the systems that shape daily life, and to ask whether those systems are aligned with our well-being.

The Science Behind Digital Dependency

The idea of social media addiction is not new. It is rooted in well-established psychological principles that explain how habits form and why some behaviors become difficult to control. At its core is the brain's reward system. When people engage with social media, especially when they receive likes, comments, or shares, the brain releases dopamine, a chemical often associated with pleasure and motivation. Over time, the brain starts to link social media use with reward, which encourages repeated behavior.

However, it is not just the presence of rewards that matters; it is their unpredictability. Sometimes a post gains a lot of attention, and other times it does not. That uncertainty creates a pattern similar to what is seen in gambling: because the next reward could arrive at any moment, users keep coming back to check.

This is where design becomes important. Features like infinite scrolling remove natural stopping points. Notifications pull users back into the app, even when they are trying to focus on something else. Algorithmic feeds prioritize content that is most likely to keep people engaged, not necessarily what is most meaningful or healthy.

It is also important to recognize that not everyone experiences these effects in the same way. Some users can engage with social media without issue. Others may find themselves spending more time than they intended, feeling anxious when they are offline, or struggling to disconnect.

Researchers have also found links between heavy social media use and mental health challenges. These include increased anxiety, disrupted sleep, and lower self-esteem. Teenagers appear to be particularly vulnerable, as their brains are still developing and they are more sensitive to social feedback.

None of this means social media is inherently harmful. It can provide support, connection, and access to information. The issue arises when the design of these platforms encourages patterns of use that are difficult for users to self-regulate.

From User Choice to Platform Responsibility

For years, tech companies have relied on a simple defense. Their platforms are tools, and users are responsible for how they use them. This frames social media as neutral, placing the burden on individuals rather than corporations. However, this verdict suggests that the legal landscape may be changing. Courts are beginning to examine not just how platforms are used, but how they are designed.

If design choices are proven to influence behavior in predictable ways, then companies may no longer be able to claim complete neutrality. Instead, they could be seen as active participants in shaping user behavior. This is similar to how other industries have been regulated. For example, companies that produce food, pharmaceuticals, or even automobiles are expected to consider safety in their design. They cannot simply say that misuse is entirely the consumer’s responsibility.

Applying that logic to tech raises questions. Social media platforms are not physical products, and their impact is harder to measure. However, the argument is gaining traction. If a company knows that certain features can lead to excessive use or harm, should it be required to change those features?

The verdict does not provide all the answers, but it opens the door for further legal challenges. It also signals to other courts that these arguments are worth considering. Over time, this could lead to new standards for how digital products are designed and regulated.

Companies are likely to push back. They may argue that increased regulation could limit innovation or restrict free expression. But one thing is clear: the conversation is no longer just about whether users should spend less time online. It is about whether platforms should be built differently in the first place.

Big Tech’s Design Playbook Under Scrutiny

As the legal pressure grows, more attention is being placed on the specific strategies that platforms use to keep users engaged. Many of these strategies are not new. In fact, they have been refined over years of research and experimentation. One of the most well-known techniques is the use of personalized algorithms. These systems analyze user behavior to determine what content is most likely to keep someone scrolling. While this can make the experience feel tailored and enjoyable, it also means that users are constantly being shown content designed to capture their attention.

Another key feature is the removal of friction. Actions like liking a post, sharing content, or watching the next video require little to no effort. This makes it easy for users to continue engaging without stopping to think about how much time has passed. Notifications also play a major role. By sending alerts at strategic moments, platforms can draw users back in throughout the day. Even small signals, like a red notification badge, can create a sense of urgency.

From a business perspective, these strategies make sense: more engagement often leads to more advertising revenue. However, the verdict suggests that these practices may now be viewed differently. Instead of being seen purely as smart design, they could be interpreted as mechanisms that encourage overuse. This does not mean that all engagement strategies are harmful, but it does raise questions about where the line should be drawn.

Notably, some former tech insiders have spoken out about these practices, describing how platforms are designed with a deep understanding of human psychology. Their accounts have helped put the spotlight firmly on how these products are built.

Stories Behind the Statistics

While legal arguments and scientific studies are important, they only tell part of the story. The real impact of social media is often felt on a personal level, and those experiences are what brought this issue into the headlines.

Many families involved in these cases have shared deeply emotional accounts. Some describe children who have become withdrawn or anxious after spending long hours online. Others talk about disrupted sleep patterns, declining academic performance, or struggles with self-image. These stories are powerful because they remind us that behind every statistic is a real person navigating a complex digital world.

It’s also important to approach these stories with care. Not every negative experience can be traced directly to social media. Life is influenced by many factors, including family dynamics, school environments, and broader societal pressures. This is where balance becomes essential: recognizing patterns while also understanding that individual experiences vary.

Interestingly, many users also report positive experiences. Social media can provide a sense of community, especially for people who feel isolated in their offline lives. It can also be a space for creativity, learning, and self-expression.

Social media can be both helpful and harmful, depending on how it is used and how it is designed. Finding a way to preserve the benefits while reducing the risks is key.

What This Means for the Future of Regulation

Looking ahead, the implications of this verdict could extend far beyond a single case. If courts continue to take this approach, it may lead to new forms of regulation for the tech industry.

One possible outcome is increased transparency. Companies could be required to explain how their algorithms work or how certain features influence user behavior. This would allow both regulators and users to make more informed decisions.

Another possibility is the introduction of design standards. Just as other industries must meet safety requirements, tech companies might be expected to consider user well-being in their design processes. This could include limits on certain features or the introduction of more effective safeguards.

There is also the potential for age-specific protections. Given that younger users are more vulnerable, regulations could focus on creating safer digital environments for children and teenagers.

However, implementing these changes will not be straightforward. Technology evolves quickly, and regulations often struggle to keep pace. There is also the challenge of balancing protection with freedom, especially when it comes to issues like speech and access to information. Despite these challenges, the direction is becoming clearer. Governments, courts, and the public are all paying closer attention to how digital platforms operate.

A Turning Point in How We See Technology

For a long time, there was an assumption that innovation should move fast and that any negative consequences could be addressed later. Now, that mindset is being questioned. People are starting to ask whether progress should also include responsibility from the start. This does not mean rejecting technology. Instead, it means being more intentional about how it is created and used. It means recognizing that the tools we rely on every day can shape our behavior in subtle ways.

At the same time, this moment encourages users to think about their own habits and how they interact with digital platforms. While companies may play a role, individual awareness is still important. In many ways, this is a shared responsibility. It involves designers, policymakers, and users all working together to create a healthier digital environment.

The landmark verdict has opened the door to a new chapter in social media and responsibility. Perhaps the most important takeaway is that complexity cannot be ignored. Social media is not purely good or purely harmful. It exists in a space where benefits and risks are deeply intertwined.

By acknowledging that two truths can exist at once, we can move toward a more balanced understanding. We can recognize the value these platforms bring while also addressing the ways they may fall short.

As this conversation continues, the relationship between users and technology is evolving. And for the first time in a long time, it feels like we are beginning to shape that relationship more consciously.

A.I. Disclaimer: This article was created with AI assistance and edited by a human for accuracy and clarity.